States are increasingly delegating a set of momentous decisions to algorithms. In no field is this shift more visible, or more problematic, than in citizen security and justice. Today, algorithms are used to decide who should be detained before trial, where police patrols should be deployed, and even which faces should be flagged as suspects. These tools promise greater consistency in decision-making and the possibility of overcoming the well-documented biases of human judgment. But they also raise difficult questions about fairness, accountability and the future of democratic governance.

Algorithms are sets of rules that process information: procedures that take certain input data and produce an output. In the context of security and justice, many of the algorithms being deployed are based on supervised learning. These systems are trained on labeled data: past examples in which both the characteristics (for example, a defendant's age or criminal record) and the outcomes (for example, whether or not the person reoffended) are known. The result is a prediction: how likely is a person with characteristics X or Y to commit another crime if we release them on probation? What is the probability that a robbery will occur on a specific corner next week?
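To make the idea of supervised learning concrete, here is a minimal sketch in Python. It is not any real risk assessment tool: the training examples are invented for illustration, the two features (age in decades and number of prior arrests) are assumptions, and the model is a plain logistic regression fitted by gradient descent.

```python
import math

# Hypothetical, hand-made training examples (NOT real data).
# features = (age in decades, number of prior arrests)
# label = 1 if the person reoffended, 0 otherwise
TRAINING = [
    ((2.0, 3.0), 1), ((2.5, 2.0), 1), ((3.0, 4.0), 1), ((2.8, 3.0), 1),
    ((2.2, 1.0), 0), ((4.5, 0.0), 0), ((5.0, 1.0), 0), ((3.8, 0.0), 0),
]


def sigmoid(z):
    """Map any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features):
    """Return the estimated probability of reoffending for one person."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def train(examples, steps=2000, learning_rate=0.1):
    """Fit a logistic regression by batch gradient descent on the log loss."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    n = len(examples)
    for _ in range(steps):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for features, label in examples:
            error = predict(weights, bias, features) - label
            for i, x in enumerate(features):
                grad_w[i] += error * x
            grad_b += error
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias


weights, bias = train(TRAINING)

# Predictions for two hypothetical people who are not in the training data.
risk_young_many_priors = predict(weights, bias, (2.0, 4.0))
risk_older_no_priors = predict(weights, bias, (5.0, 0.0))
print(f"young, four priors: {risk_young_many_priors:.2f}")
print(f"older, no priors:   {risk_older_no_priors:.2f}")
```

The model only knows what the labeled examples tell it. If the labels record who was arrested rather than who actually offended, the predictions inherit that distortion.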

A promising example is the use of algorithmic risk assessment tools. These models predict the probability of recidivism or of failure to appear at a court hearing, using historical data to fit their parameters. In doing so, they offer a possible correction to the failures of human judgment in pretrial decisions. Numerous studies have shown that judges, despite their authority, are poor at predicting the future behavior of defendants. Their decisions can be influenced by irrelevant factors: for example, whether it is almost lunchtime or whether their university football team lost the previous weekend. They also tend to be swayed by the immediately preceding case: earlier cases bias their current decisions. Taken together, judges show low accuracy when assessing who poses a real risk.

It is understandable, then, that reformers across the political spectrum see the use of algorithms as an option. If a machine can more reliably identify low-risk people, the benefits can be substantial: fewer unnecessary detentions, better use of resources, and greater consistency in decisions. In addition, if the judicial system routinely releases high-risk offenders, we must also seek new solutions to reduce recidivism.

However, the same data that feed these tools frequently encode historical patterns of inequality and discrimination. If training data reflect a world in which Black people, Latin Americans or migrants (in the United States) are arrested more frequently, regardless of their actual behavior, the algorithm can learn to associate race, ethnicity or neighborhood with risk. And, since these systems are usually owned by private companies, the public has no way to examine how the predictions are produced or how they are used.

The risks are real. Facial recognition software makes far more errors with dark-skinned people than with white people. “Predictive policing” tools, which anticipate where crimes will occur, tend to send patrols to the same neighborhoods that are already saturated with police presence, with the risk of deepening tense relations between communities and authorities. As the economist Giovanni Mastrobuoni shows in his study of Milan, these technologies can improve clearance rates and reduce crime. But even well-intentioned deployments run the risk of reinforcing inequalities if they are not accompanied by robust ethical and institutional frameworks.

Recent literature in the economics of crime has shown that algorithms can be accurate. But they must also be fair. Many models reproduce, and even amplify, the biases present in the data on which they were trained, because these data reflect past human decisions: who was arrested, who was prosecuted, who was watched more intensely. Faced with this challenge, economists propose several strategies: build transparent and auditable algorithms; evaluate their performance not only on accuracy but also on fairness; and, above all, establish democratic oversight of their use. As authors such as Jon Kleinberg have warned, there are no purely technical solutions to this problem: every algorithmic decision involves normative judgments about which errors we are willing to tolerate and whom we are willing to sacrifice in the name of security gains.
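Evaluating a model on fairness as well as accuracy can be sketched in a few lines of Python. The audit records below are invented for illustration, the group labels "A" and "B" are placeholders, and the false positive rate is only one of several possible fairness measures; choosing among them is itself one of the normative judgments described above.

```python
from collections import defaultdict

# Hypothetical audit records (NOT real data):
# (group, model flagged the person as high risk, person actually reoffended)
RECORDS = [
    ("A", True, True), ("A", False, False), ("A", False, False),
    ("A", True, False), ("A", False, True), ("A", False, False),
    ("B", True, True), ("B", True, False), ("B", True, False),
    ("B", False, False), ("B", True, True), ("B", False, False),
]


def accuracy(records):
    """Share of people for whom the prediction matched the outcome."""
    correct = sum(1 for _, predicted, actual in records if predicted == actual)
    return correct / len(records)


def false_positive_rate_by_group(records):
    """Among people who did NOT reoffend, the share wrongly flagged, per group."""
    wrongly_flagged = defaultdict(int)
    did_not_reoffend = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            did_not_reoffend[group] += 1
            if predicted:
                wrongly_flagged[group] += 1
    return {g: wrongly_flagged[g] / did_not_reoffend[g] for g in did_not_reoffend}


overall_accuracy = accuracy(RECORDS)
accuracy_by_group = {
    g: accuracy([r for r in RECORDS if r[0] == g]) for g in ("A", "B")
}
fpr = false_positive_rate_by_group(RECORDS)

print(f"overall accuracy: {overall_accuracy:.2f}")
print(f"accuracy by group: {accuracy_by_group}")
print(f"false positive rate by group: {fpr}")  # A: 0.25, B: 0.5
```

In this made-up example the model is equally accurate for both groups, yet people in group B who did not reoffend are wrongly flagged twice as often as those in group A. An audit that reports only accuracy would miss that gap.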

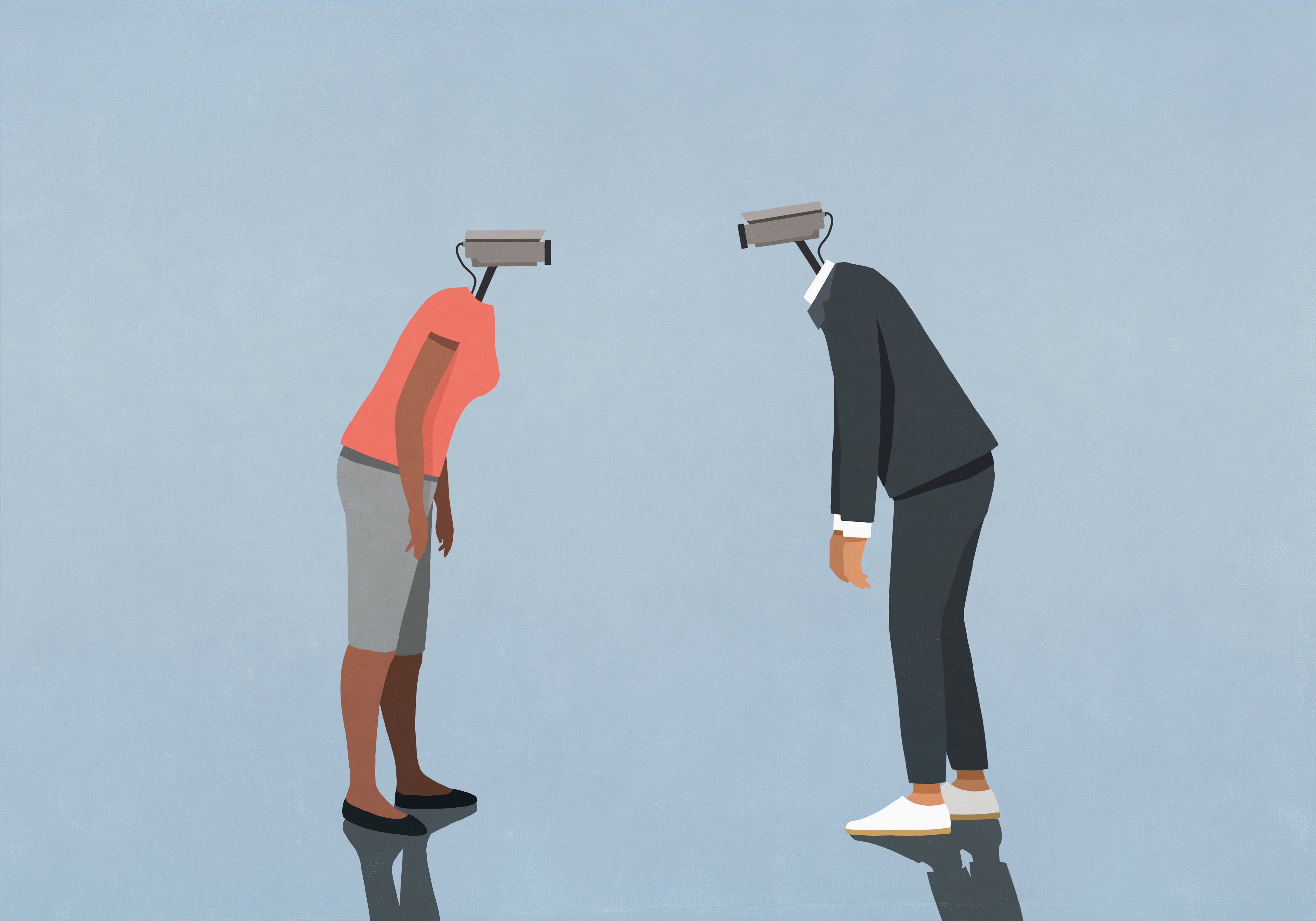

The expanding use of algorithms in citizen security forces us to face fundamental normative dilemmas: what are we trying to optimize, and at what cost? Any debate about algorithmic fairness should also ask who builds and who controls these systems. In several cases, algorithms operate under a utilitarian logic, prioritizing the interests of the majority over minority rights. This perspective prioritizes aggregate results (fewer crimes, fewer victims) but usually ignores how those benefits and burdens are distributed. More concretely, if a prediction tool reduces crime overall but concentrates surveillance in a few marginalized neighborhoods, increasing police abuse there, should we deploy it?

The philosopher John Rawls argued that institutions must be judged not only by this kind of outcome, but by how they affect the most disadvantaged. If an algorithm reproduces existing racial or socioeconomic hierarchies, even unintentionally, it violates the liberal principle of fairness and the equal moral worth of all people, according to Rawls. It is not enough for an algorithm to be “accurate on average”; it must also be just in its treatment of the most vulnerable. Abstract as this may sound, these are not ethereal concerns. They are political decisions, encoded in lines of code, about which errors we are willing to tolerate and whose lives we are willing to put at risk or prioritize.

Often these decisions are made not by democratically elected authorities but by private companies. For this reason, the growing role of large technology companies in the administration of public functions raises the problem of accountability. Many of the tools that are shaping public security globally, from facial recognition to risk-scoring systems, are developed and operated by private firms. These companies are not subject to the same obligations as the State. Their algorithms are usually protected as trade secrets, their contracts are not always public, and their models are rarely subject to independent audits or open to public scrutiny. There is a real risk that several dimensions of public security end up defined by opaque systems that undermine the principles of fairness and justice.

This is not a call to ban the state's use of algorithms. In fact, the magnitude of the security challenges in Latin America demands that we explore multiple tools to reduce crime and violence. I am not against their use. In my classes on crime and citizen security at the University of Los Andes, I propose interventions such as DNA databases. Algorithms to predict criminal recidivism and the use of cameras in public spaces are interesting tools in countries such as Colombia. But the tools and their use must be compatible with our values. We must have the debate on how to govern their use, both in the courts and on the streets. This means establishing clear transparency rules, funding for impact evaluations (which would allow adverse effects to be monitored) and oversight by independent institutions. Above all, it requires a democratic conversation about what we want these technologies to do, and what we cannot allow them to do.

I urge the candidates for the presidency of Colombia to face these issues directly and responsibly. Algorithms can help mitigate hidden sources of discrimination in the provision of justice and security. But if they are implemented without adequate controls, they can also reproduce historical inequalities or generate new forms of injustice.
